Why Data Centers Are Not One System: They Are Systems of Systems

Modern data centers are often described in singular terms, as if they operate as one unified machine. In practice, they are far more complex. A data center is an ecosystem of tightly coupled systems, where power, cooling, monitoring, and infrastructure continuously interact. Each layer has its own dependencies, failure modes, and operational constraints. The reality is that uptime and reliability are not delivered by any single component, but by how well these systems function together under stress, load, and change.

Understanding Data Centers as Systems of Systems

At a foundational level, data centers are built on interconnected systems rather than isolated infrastructure. Power infrastructure, cooling systems, and IT environments operate in parallel but are deeply interdependent. The performance of one system directly influences the others, creating a dynamic operational environment where coordination is critical.

Why a Single-System Mindset Fails

Treating a data center as a single system leads to blind spots. A failure in electrical supply can cascade into cooling disruption, which then impacts server performance and uptime. Downtime rarely originates from a single failure point. It is often the result of misaligned systems or insufficient redundancy across layers.
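The cascade described above can be sketched as a walk over a dependency map. The system names and the map below are hypothetical, and the sketch deliberately ignores redundancy, so it shows the worst case in which every dependency is a hard one.

```python
from collections import deque

# Hypothetical dependency map: each system lists the systems it depends on.
DEPENDS_ON = {
    "utility_feed": [],
    "cooling_plant": ["utility_feed"],
    "server_racks": ["utility_feed", "cooling_plant"],
    "dcim_monitoring": ["utility_feed"],
}


def impacted_by(failed, depends_on):
    """Return every system that directly or transitively depends on `failed`."""
    # Invert the map so we can walk from a failed system to its dependents.
    dependents = {name: [] for name in depends_on}
    for name, deps in depends_on.items():
        for dep in deps:
            dependents[dep].append(name)

    impacted, queue = set(), deque([failed])
    while queue:
        current = queue.popleft()
        for child in dependents[current]:
            if child not in impacted:
                impacted.add(child)
                queue.append(child)
    return impacted


print(sorted(impacted_by("utility_feed", DEPENDS_ON)))
print(sorted(impacted_by("cooling_plant", DEPENDS_ON)))
```

A real facility would add backup sources to the map, which is exactly what stops a single failure from reaching the racks.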

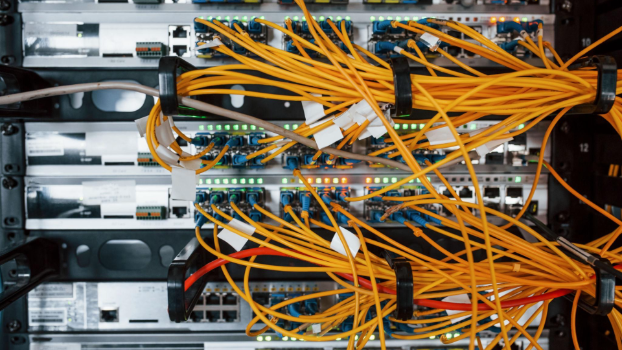

Core Layers of Interconnected Systems

The core layers include electrical systems delivering power, cooling systems managing heat and temperature, and monitoring platforms such as DCIM providing visibility. These layers are supported by environmental sensors, control logic, and operational workflows that tie everything together. Without alignment across these systems, reliability quickly degrades.

Power Infrastructure: The Backbone of Reliability

Power is the backbone of any data center. Without a resilient power infrastructure, no amount of advanced IT hardware or cooling optimization can maintain uptime. The goal is not just to supply power, but to ensure continuous, uninterrupted delivery under all conditions.

Power Generation and Backup Systems

Backup generators and uninterruptible power supply (UPS) units are critical for maintaining continuity. UPS systems provide immediate power during outages, bridging the gap until generators start and accept load. These layers are designed to handle failover events seamlessly, so that IT equipment sees no interruption.
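The bridging requirement can be expressed as a simple check: UPS runtime at the current load must exceed the time generators need to start and accept load, with some margin. The function name, the safety factor, and the example durations below are illustrative assumptions, not vendor figures.

```python
def ups_bridges_gap(ups_runtime_s, generator_start_s, safety_factor=2.0):
    """Return True if UPS runtime covers generator start-up with the given margin."""
    if ups_runtime_s < 0 or generator_start_s < 0:
        raise ValueError("durations must be non-negative")
    return ups_runtime_s >= generator_start_s * safety_factor


# Illustrative numbers only: 300 s of battery, 30 s for generators to accept load.
print(ups_bridges_gap(ups_runtime_s=300, generator_start_s=30))  # True
# 45 s of battery does not leave a 2x margin over a 30 s start.
print(ups_bridges_gap(ups_runtime_s=45, generator_start_s=30))   # False
```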

Power Distribution Architecture

Power distribution is where complexity increases. Switchgear, transformers, and distribution paths must be carefully designed to avoid single points of failure. Redundant pathways ensure that power can be rerouted instantly, supporting high levels of reliability.

Transfer and Switching Mechanisms

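Automatic transfer switches (ATS) and static transfer switches (STS) move load between power sources when one fails. An ATS is typically electromechanical and relies on the UPS to carry the load while it transfers, whereas an STS uses solid-state switching to transfer within a fraction of an AC cycle. These components are essential in mission-critical environments, where even milliseconds of interruption can have cascading effects. Their role is to maintain continuity without human intervention.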

Redundancy Models and Uptime Engineering

Redundancy is not a feature. It is a strategy embedded across every layer of the data center. It directly determines uptime and operational resilience.

Common Redundancy Configurations

Configurations such as N+1, 2N, and 2N+1 define how systems are duplicated to ensure availability, where N is the capacity needed to carry the full load. N+1 adds one spare component to cover a single failure, 2N fully duplicates the infrastructure, and 2N+1 adds a spare on top of that duplication. Each model balances cost against reliability, depending on business requirements.
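As a rough illustration of how these models translate into equipment counts, the sketch below computes the number of units to install for a given load. It assumes identical units and reads 2N+1 as two full sets plus one spare; the function name and the example figures are hypothetical.

```python
import math


def units_required(load_kw, unit_kw, model):
    """Number of identical units to install for a load under a redundancy model."""
    if load_kw <= 0 or unit_kw <= 0:
        raise ValueError("load and unit capacity must be positive")
    n = math.ceil(load_kw / unit_kw)  # N: units needed just to carry the load
    counts = {"N": n, "N+1": n + 1, "2N": 2 * n, "2N+1": 2 * n + 1}
    if model not in counts:
        raise ValueError(f"unknown redundancy model: {model}")
    return counts[model]


# Example: a 900 kW load served by 250 kW units needs N = 4.
for model in ("N", "N+1", "2N", "2N+1"):
    print(model, units_required(900, 250, model))
```

The jump from 5 units at N+1 to 8 units at 2N is where the cost side of the trade-off shows up.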

Uptime Institute Standards and Tier Design

The Uptime Institute defines four tiers, Tier I through Tier IV, that classify data centers based on redundancy and fault tolerance. Tier III requires concurrently maintainable systems, allowing planned maintenance without downtime, and Tier IV adds fault tolerance against unplanned failures. This standard reflects the importance of system-wide redundancy rather than isolated backups.

Failover and Resiliency Design

Failover mechanisms are designed to respond automatically to failures. Power resiliency depends on how quickly and effectively systems can recover. True resilience comes from layered redundancy, where multiple systems support each other during disruptions.

Cooling Systems: Managing Heat and Thermal Load

Cooling is often underestimated, yet it is just as critical as power. Without effective cooling systems, heat buildup can compromise hardware and reduce reliability.

Traditional Cooling Architecture

HVAC systems, air handlers, and hot aisle/cold aisle containment strategies are used to manage airflow and temperature. These designs optimize cooling efficiency while maintaining stable operating conditions.

Advanced Cooling Technologies

As workloads become denser, traditional cooling methods are supplemented with liquid cooling loops and coolant distribution units (CDUs). These systems are more efficient at removing heat, particularly in high-performance environments.

Thermal Load and System Interaction

Thermal load is directly linked to power consumption. As power usage increases, so does heat generation. Cooling systems must scale accordingly, creating a tight dependency between power and cooling infrastructure.
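A back-of-the-envelope version of this dependency: if essentially all electrical power drawn by IT equipment ends up as heat (a common simplifying assumption), the cooling load follows directly from the power figure. The function name is hypothetical; the conversion factors are standard unit conversions.

```python
KW_TO_BTU_PER_HR = 3412.14  # 1 kW is about 3,412 BTU/hr
KW_PER_TON = 3.517          # 1 ton of refrigeration is about 3.517 kW


def cooling_load(it_power_kw):
    """Return (BTU/hr, tons of refrigeration) needed to remove the IT heat load.

    Assumes every kW of IT power becomes a kW of heat to be removed.
    """
    if it_power_kw < 0:
        raise ValueError("power must be non-negative")
    return it_power_kw * KW_TO_BTU_PER_HR, it_power_kw / KW_PER_TON


btu_per_hr, tons = cooling_load(100)  # a 100 kW IT load
print(f"{btu_per_hr:,.0f} BTU/hr, {tons:.1f} tons")
```

Doubling the IT load doubles both figures, which is why power and cooling capacity have to be planned together.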

Monitoring, Control, and Operational Intelligence

Visibility across systems is essential for maintaining reliability. Monitoring enables proactive management rather than reactive response.

DCIM and Infrastructure Monitoring

DCIM platforms integrate data from power, cooling, and environmental systems. They provide a unified view of infrastructure performance, enabling operators to identify risks before they escalate.

Environmental Sensors and Data Collection

Environmental sensors track temperature, humidity, and airflow. These inputs are critical for maintaining optimal conditions and preventing failures caused by environmental fluctuations.
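A minimal sketch of how such readings might be screened is shown below. The `Reading` type, the metric names, and the limits are all hypothetical placeholders; real thresholds should come from equipment specifications and site policy.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor_id: str
    metric: str
    value: float


# Hypothetical (low, high) limits per metric; set these per site.
LIMITS = {
    "temperature_c": (18.0, 27.0),
    "humidity_pct": (20.0, 80.0),
}


def out_of_range(readings, limits=LIMITS):
    """Return the readings whose value falls outside the limits for their metric."""
    alerts = []
    for reading in readings:
        bounds = limits.get(reading.metric)
        if bounds is None:
            continue  # no limit configured for this metric
        low, high = bounds
        if not low <= reading.value <= high:
            alerts.append(reading)
    return alerts


sample = [
    Reading("rack-12-inlet", "temperature_c", 24.5),
    Reading("rack-14-inlet", "temperature_c", 31.0),
    Reading("hall-a", "humidity_pct", 15.0),
]
for alert in out_of_range(sample):
    print(alert.sensor_id, alert.metric, alert.value)
```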

Capacity Planning and Optimization

Capacity planning ensures that infrastructure can handle future demand. It involves balancing load, efficiency, and redundancy to avoid overutilization or underperformance.
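One way to make this balance concrete is to subtract the capacity held back for redundancy, apply a utilization ceiling, and project how long the remaining headroom lasts. The function names, the 80% ceiling, the linear growth model, and the example figures are assumptions for illustration only.

```python
import math


def usable_capacity_kw(installed_kw, redundancy_reserve_kw, max_utilization=0.8):
    """Capacity available for load after reserving redundancy and a utilization ceiling."""
    if not 0 < max_utilization <= 1:
        raise ValueError("max_utilization must be in (0, 1]")
    return (installed_kw - redundancy_reserve_kw) * max_utilization


def months_until_full(current_kw, growth_kw_per_month, usable_kw):
    """Whole months until load reaches usable capacity, assuming linear growth.

    Returns 0 if already at or over capacity, None if the load is not growing.
    """
    if current_kw >= usable_kw:
        return 0
    if growth_kw_per_month <= 0:
        return None
    return math.ceil((usable_kw - current_kw) / growth_kw_per_month)


# Example: five 250 kW units in an N+1 arrangement, so one unit is held in reserve.
usable = usable_capacity_kw(installed_kw=1250, redundancy_reserve_kw=250)
print(usable)
print(months_until_full(current_kw=600, growth_kw_per_month=25, usable_kw=usable))
```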

Efficiency Metrics and Sustainability

Efficiency is no longer optional. It is a key driver of both operational cost and environmental impact.

Power Usage Effectiveness (PUE)

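PUE measures how efficiently power is used within a data center. It is the ratio of total facility energy to the energy delivered to IT equipment, so the ideal value is 1.0. Lower PUE values indicate better efficiency, with more power reaching IT equipment rather than being consumed by overhead systems such as cooling and power conversion.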
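As a small worked example, PUE is total facility energy divided by IT equipment energy over the same period. The function name and the energy figures below are illustrative.

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


# 1,500 kWh drawn by the facility, of which 1,000 kWh reached IT equipment.
print(pue(1500, 1000))  # 1.5
```

A PUE of 1.5 means that for every kWh used by IT equipment, another 0.5 kWh went to overhead.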

Carbon Usage Effectiveness (CUE)

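CUE evaluates the environmental impact of energy consumption. It relates the carbon emissions caused by the facility's total energy use to the energy consumed by IT equipment, so a lower value indicates a smaller carbon footprint per unit of IT work.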
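A minimal sketch of the calculation, assuming a single average carbon emission factor for the energy supply: CUE is the emissions from total facility energy divided by IT equipment energy, in kg of CO2 equivalent per kWh. The function name, the 0.4 kg/kWh factor, and the energy figures are placeholders.

```python
def cue(total_facility_kwh, it_equipment_kwh, carbon_factor_kg_per_kwh):
    """Carbon Usage Effectiveness in kgCO2e per kWh of IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if carbon_factor_kg_per_kwh < 0:
        raise ValueError("carbon factor must be non-negative")
    total_emissions_kg = total_facility_kwh * carbon_factor_kg_per_kwh
    return total_emissions_kg / it_equipment_kwh


# Placeholder supply factor of 0.4 kgCO2e per kWh.
print(round(cue(1500, 1000, 0.4), 3))
```

Under this single-factor assumption, CUE equals the emission factor multiplied by the ratio of facility energy to IT energy, so either a cleaner supply or lower overhead reduces it.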

Sustainable Infrastructure Design

Sustainability involves optimizing both power and cooling systems. This includes reducing waste, improving efficiency, and integrating renewable energy where possible.

System Interdependencies and Failure Scenarios

Data centers are defined by their interdependencies. Understanding these relationships is key to preventing downtime.

Power and Cooling Interdependence

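Cooling systems rely on power to operate. If power to the cooling plant fails, heat removal stops while any IT equipment still running on backup power keeps generating heat, leading to rapid temperature increases. This interaction highlights the importance of coordinated system design.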

Common Causes of Downtime

Downtime often results from power failures, cooling inefficiencies, or inadequate redundancy. Human error and poor monitoring can also contribute to system failures.

Designing for Reliability and Resilience

Reliability is achieved by eliminating single points of failure and implementing layered redundancy. Regular testing and validation ensure that systems perform as expected under real conditions.
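Single points of failure can be found on a simplified model by removing one component at a time and checking whether the load can still be reached from a source. The topology and component names below are hypothetical, and the sketch considers connectivity only, not capacity.

```python
def reachable(graph, start, goal, removed):
    """Depth-first search from start to goal, skipping the removed node."""
    stack, seen = [start], {start}
    while stack:
        node = stack.pop()
        if node == goal:
            return True
        for nxt in graph.get(node, []):
            if nxt != removed and nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return False


def single_points_of_failure(graph, source, load):
    """Components whose individual loss disconnects the load from the source."""
    nodes = set(graph) | {n for targets in graph.values() for n in targets}
    return sorted(
        n for n in nodes - {source, load}
        if not reachable(graph, source, load, removed=n)
    )


# Hypothetical power path: two UPS branches that converge on one PDU.
POWER_PATH = {
    "source": ["ups_a", "ups_b"],
    "ups_a": ["pdu"],
    "ups_b": ["pdu"],
    "pdu": ["rack"],
}
print(single_points_of_failure(POWER_PATH, "source", "rack"))  # ['pdu']
```

The duplicated UPS branches do not help here because both feed the same PDU, which is the kind of gap that testing and validation are meant to expose.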

Future Trends in Data Center Systems Design

Data centers are evolving to meet increasing demand and complexity. This evolution is driving innovation across all systems.

High-Density and AI Workloads

AI workloads are increasing power consumption and thermal load. This requires more advanced cooling systems and stronger power infrastructure.

Intelligent Automation and Smart Infrastructure

Automation is transforming how data centers operate. AI-driven monitoring and predictive maintenance improve efficiency and reduce risk.

Next-Generation Power and Energy Systems

Emerging technologies such as energy storage and microgrids are shaping the future of data center design by strengthening power resiliency.

Conclusion: Designing for a System of Systems Future

Data centers are not defined by individual components, but by how systems interact. Power, cooling, monitoring, and infrastructure must operate as a cohesive whole. Redundancy, efficiency, and sustainability are no longer isolated goals. They are interconnected outcomes of well-designed systems. As demand continues to grow, the ability to design and manage these systems as an integrated ecosystem will define the next generation of reliable, high-performance data centers.