Cooling Systems: The Silent Layer That Keeps Data Centers Alive

Modern data centers are engineered ecosystems where power and heat exist in constant tension. Nearly every watt consumed by IT equipment is ultimately dissipated as heat, and that heat must be removed continuously. Cooling systems operate quietly behind the scenes, yet they are inseparable from uptime, reliability, and performance. As infrastructure shifts toward high-density workloads driven by AI and machine learning, cooling is no longer a background utility. It is a strategic layer that determines whether systems remain stable or fail under pressure. The real sophistication lies not just in removing heat, but in doing so efficiently, redundantly, and intelligently.

Understanding the Role of Cooling in Data Centers

Cooling is fundamentally about controlling thermal load while preserving system integrity. Servers, storage, and power distribution components generate heat continuously. Without control of temperature, airflow, and humidity, processors throttle to protect themselves and component failure rates rise. ASHRAE's thermal guidelines define recommended and allowable environmental ranges for IT equipment, but in practice operators design well within those limits to preserve a safety margin.
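
As a rough illustration of designing well within the limits, the sketch below classifies a server inlet temperature against a band with an operator margin. The 18 to 27 °C band is the commonly cited ASHRAE recommended inlet range for air-cooled equipment and should be checked against the current edition of the guidelines. The 2 °C margin and the names `RECOMMENDED_INLET_C` and `inlet_status` are assumptions made for this example.

```python
# Assumed recommended inlet band in degrees Celsius; verify against the
# current ASHRAE thermal guidelines before relying on it.
RECOMMENDED_INLET_C = (18.0, 27.0)


def inlet_status(temp_c, band=RECOMMENDED_INLET_C, margin_c=2.0):
    """Classify an inlet temperature as ok, near_limit, or out_of_range."""
    low, high = band
    if temp_c < low or temp_c > high:
        return "out_of_range"
    # Inside the band but within the operator's safety margin of an edge.
    if temp_c < low + margin_c or temp_c > high - margin_c:
        return "near_limit"
    return "ok"


for reading in (22.0, 26.0, 30.0):
    print(reading, inlet_status(reading))
```

Treating the margin as a separate parameter keeps the published range and the operator's own design target distinct.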

Cooling also directly supports uptime. Even small temperature excursions, if left uncorrected, can escalate into thermal shutdowns and downtime. In modern facilities, cooling is tightly coupled with the electrical architecture so that thermal conditions remain stable as load varies.

Why Cooling Is Critical for Uptime and Reliability

Uptime is not achieved through a single system but through layers of redundancy. Cooling plays a central role in this resilience strategy. Configurations such as N+1 and 2N ensure that even if one cooling component fails, others can absorb the load without disruption.

In practice, this means redundant chillers, backup computer room air conditioner (CRAC) units, and distributed airflow systems that prevent localized overheating. Cooling failures are rarely isolated. They often trigger cascading issues across power and compute layers. Designing for redundancy minimizes that risk and supports continuous operation.

Environmental Control: Temperature, Humidity, and Airflow

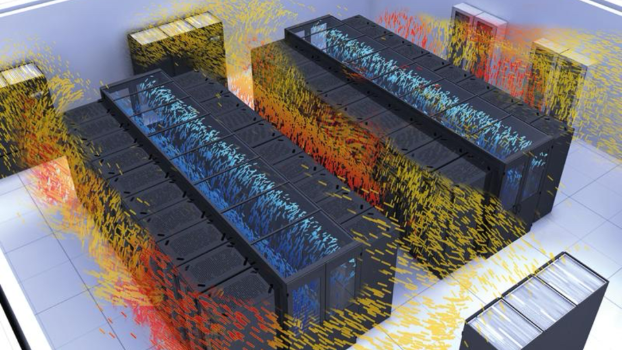

Effective environmental control is about balance. Temperature must remain within a narrow range to protect hardware. Humidity must be held between two failure modes: too high and condensation forms, too low and electrostatic discharge becomes a risk. Airflow management is equally critical, often achieved through hot aisle and cold aisle containment strategies.

These elements work together to maintain consistent operating conditions. Poor airflow design, for example, can create hotspots even when overall temperature appears stable. Precision in environmental control extends equipment lifespan and reduces unexpected failures.

Core Cooling Architectures in Modern Data Centers

Cooling architectures have evolved alongside compute density. What once relied heavily on air cooling now incorporates advanced methods designed to handle significantly higher thermal loads.

Air Cooling Systems and Their Limitations

Air cooling remains the foundation of many facilities, particularly those with moderate density. CRAC systems circulate conditioned air, using controlled airflow to dissipate heat. This approach is proven and widely understood.

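However, as rack densities increase, air cooling alone becomes less effective. Air has a low volumetric heat capacity, so removing more heat requires either more airflow or a larger temperature rise across the equipment. Beyond a certain rack density, the airflow and fan power needed to hold temperatures stable make air cooling inefficient and energy intensive.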
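
The limit of air can be made concrete with the sensible heat relation: heat removed equals density times specific heat times volumetric flow times temperature rise. The sketch below solves that relation for airflow. The air properties are approximate room-condition values, and the function name and the 10 kW rack with a 12 K temperature rise are assumptions chosen for illustration.

```python
AIR_DENSITY_KG_M3 = 1.2   # approximate density of air at room conditions
AIR_CP_J_KG_K = 1005.0    # approximate specific heat of air
M3S_TO_CFM = 2118.88      # cubic metres per second to cubic feet per minute


def required_airflow_m3s(heat_w, delta_t_k):
    """Airflow needed to carry heat_w watts with a delta_t_k air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_KG_K * delta_t_k)


# Hypothetical 10 kW rack with a 12 K rise from inlet to outlet.
flow = required_airflow_m3s(10_000, 12.0)
print(f"{flow:.2f} m^3/s ({flow * M3S_TO_CFM:.0f} CFM)")
```

For these assumed numbers the result is about 0.69 m³/s per rack. Because airflow scales linearly with heat load, a rack drawing several times that power needs several times the air, which is where fan energy and containment start to dominate the design.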

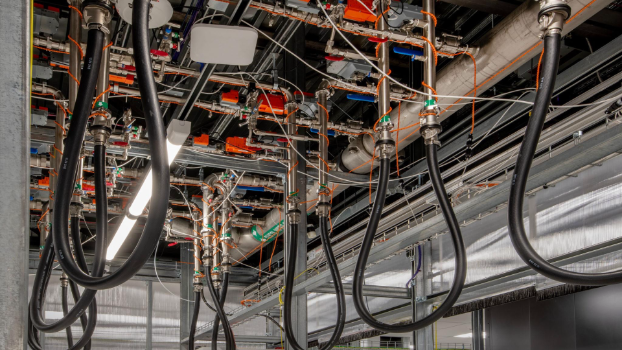

Liquid Cooling Technologies for High-Density Environments

Liquid cooling addresses these limitations because liquids carry far more heat per unit volume than air. Direct-to-chip systems circulate coolant through cold plates mounted on the hottest components, such as CPUs and GPUs, removing heat at the source and significantly improving efficiency.

Coolant distribution units (CDUs) regulate and distribute coolant, ensuring stable operation across racks. This approach reduces reliance on high-volume airflow and allows facilities to support high-density workloads without excessive energy consumption.
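
To put a number on the difference between liquid and air, the sketch below compares volumetric heat capacities and estimates the coolant flow needed for a given heat load. It assumes plain water with approximate room-temperature properties; real loops often use treated water or water-glycol mixtures with somewhat different values. The function names and the 10 kW, 10 K example are illustrative assumptions.

```python
WATER_DENSITY_KG_M3 = 997.0   # approximate, plain water near room temperature
WATER_CP_J_KG_K = 4180.0      # approximate specific heat of water
AIR_DENSITY_KG_M3 = 1.2       # approximate, room conditions
AIR_CP_J_KG_K = 1005.0


def volumetric_heat_capacity(density_kg_m3, cp_j_kg_k):
    """Heat stored per cubic metre per kelvin, in J/(m^3*K)."""
    return density_kg_m3 * cp_j_kg_k


def coolant_flow_lpm(heat_w, delta_t_k,
                     density_kg_m3=WATER_DENSITY_KG_M3,
                     cp_j_kg_k=WATER_CP_J_KG_K):
    """Coolant flow in litres per minute to remove heat_w with a delta_t_k rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    flow_m3s = heat_w / (density_kg_m3 * cp_j_kg_k * delta_t_k)
    return flow_m3s * 1000.0 * 60.0


ratio = (volumetric_heat_capacity(WATER_DENSITY_KG_M3, WATER_CP_J_KG_K)
         / volumetric_heat_capacity(AIR_DENSITY_KG_M3, AIR_CP_J_KG_K))
print(f"water holds about {ratio:.0f}x more heat per unit volume than air")
print(f"{coolant_flow_lpm(10_000, 10.0):.1f} L/min for 10 kW at a 10 K rise")
```

With these assumed properties the ratio is in the low thousands, which is why a modest coolant flow can replace a very large volume of moving air.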

Immersion Cooling and Next-Generation Approaches

Immersion cooling represents a more radical shift. Hardware is submerged in a dielectric fluid that conducts heat but not electricity, allowing heat to be removed directly and uniformly. This method is particularly effective for extreme high-density and AI-driven environments.

While adoption is still emerging, immersion cooling offers clear advantages in heat rejection efficiency and system simplicity. It eliminates many airflow challenges associated with traditional designs.

Hybrid Cooling Models for Flexible Infrastructure

Hybrid cooling combines air and liquid systems, allowing operators to match cooling methods to workload requirements. This flexibility is increasingly valuable as facilities host mixed-density environments.

Hybrid models enable gradual transition, supporting legacy systems while introducing advanced cooling where needed. They provide a practical pathway toward more efficient infrastructure without full redesign.
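
One way to picture a hybrid model is as a per-rack assignment rule. The sketch below is a minimal example that routes racks to air or liquid cooling by power density and totals the load each method must carry. The 20 kW cutoff, the rack names, and the rack loads are placeholders invented for illustration, not industry thresholds; a real cutoff depends on the facility's airflow design.

```python
def assign_cooling(rack_kw, air_max_kw=20.0):
    """Return the cooling method for a rack; air_max_kw is a hypothetical cutoff."""
    return "air" if rack_kw <= air_max_kw else "liquid"


def load_by_method(racks_kw, air_max_kw=20.0):
    """Total the rack load (kW) that each cooling method has to handle."""
    totals = {"air": 0.0, "liquid": 0.0}
    for rack_kw in racks_kw.values():
        totals[assign_cooling(rack_kw, air_max_kw)] += rack_kw
    return totals


# Hypothetical mixed-density hall: legacy racks next to GPU racks.
racks = {"legacy-01": 6.0, "legacy-02": 8.0, "gpu-01": 45.0, "gpu-02": 60.0}
print({name: assign_cooling(kw) for name, kw in racks.items()})
print(load_by_method(racks))
```

Keeping the cutoff as a parameter reflects the gradual transition described above: as liquid capacity is added, the threshold can move without changing the rest of the plan.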

Heat Management and Thermal Engineering Principles

At its core, cooling is about managing heat flow. Every system is designed around the principle of moving heat away from critical components as efficiently as possible.

Understanding Thermal Load and Heat Rejection

Thermal load represents the total heat generated within a facility. Effective heat rejection ensures that this energy is transferred out of the system, typically through chillers, cooling towers, or external heat exchange systems.

Efficiency at this stage directly impacts overall performance. Poor heat rejection leads to elevated internal temperatures, increasing strain on cooling systems and reducing reliability.
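
Because nearly all electrical power drawn by IT equipment ends up as heat, a first estimate of thermal load is simply the sum of rack power. The sketch below totals a set of rack loads and converts the result into units commonly used for cooling plant. The rack values and function names are assumptions for illustration; the conversion factors are standard approximations.

```python
KW_PER_REFRIGERATION_TON = 3.517   # 1 ton of refrigeration is about 3.517 kW
BTU_PER_HOUR_PER_KW = 3412.14      # 1 kW is about 3412 BTU/h


def thermal_load_kw(rack_loads_kw):
    """First-order thermal load: all IT power is treated as heat."""
    return sum(rack_loads_kw)


def to_refrigeration_tons(load_kw):
    return load_kw / KW_PER_REFRIGERATION_TON


def to_btu_per_hour(load_kw):
    return load_kw * BTU_PER_HOUR_PER_KW


# Hypothetical row of three racks.
load = thermal_load_kw([5.0, 8.0, 12.0])
print(f"{load:.1f} kW = {to_refrigeration_tons(load):.2f} tons "
      f"= {to_btu_per_hour(load):.0f} BTU/h")
```

This estimate ignores heat from lighting, power conversion losses, and people, so a real heat rejection plant would be sized above it.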

Designing for High-Density Workloads

High-density environments amplify these challenges. AI and machine learning workloads concentrate significant power into smaller physical spaces, increasing thermal load per rack.

This shift requires more localized cooling strategies, often at the rack or component level. Traditional room-based cooling is no longer sufficient, pushing the industry toward liquid and hybrid solutions.

Redundancy, Resilience, and Continuous Operation

Cooling systems are designed with failure in mind. Redundancy ensures that no single point of failure can compromise operations.

Redundancy Models: N+1 and 2N Explained

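N+1 redundancy provides one additional unit beyond the N units required to carry the load, offering a balance between cost and reliability. 2N configurations duplicate the entire system, so either side can carry the full load on its own. This gives greater resilience at a higher capital and operating cost.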

These models are applied across cooling infrastructure, from chillers to distribution systems, ensuring continuous operation even under failure conditions.
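
The arithmetic behind these models is easy to sketch. The example below computes how many cooling units each model installs for a given load and checks whether the remaining units still cover the load after some fail. The unit capacity, load figures, and function names are assumptions for illustration.

```python
import math


def units_required(load_kw, unit_capacity_kw):
    """N: the minimum number of units needed to carry the load."""
    return math.ceil(load_kw / unit_capacity_kw)


def units_installed(load_kw, unit_capacity_kw, model):
    """Number of units installed under the N, N+1, or 2N model."""
    n = units_required(load_kw, unit_capacity_kw)
    if model == "N":
        return n
    if model == "N+1":
        return n + 1
    if model == "2N":
        return 2 * n
    raise ValueError(f"unknown redundancy model: {model}")


def survives(load_kw, unit_capacity_kw, installed, failed):
    """True if the units still running can carry the full load."""
    return (installed - failed) * unit_capacity_kw >= load_kw


# Hypothetical 900 kW hall served by 300 kW units, so N = 3.
for model in ("N", "N+1", "2N"):
    count = units_installed(900, 300, model)
    print(model, count, "units; survives one failure:",
          survives(900, 300, count, failed=1))
```

In this example N+1 tolerates one failed unit, while 2N tolerates the loss of an entire duplicate set.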

Cooling System Failover and Risk Mitigation

Failover mechanisms automatically shift load to backup systems when issues arise. This process must be seamless to prevent temperature spikes.

Integrated monitoring systems detect anomalies early, enabling proactive intervention. In mission-critical environments, this level of preparedness is essential.
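
A failover decision can be sketched as a load redistribution problem: remove the failed units, confirm the survivors have enough capacity, and split the load among them. The example below shares load in proportion to capacity. This is a simplified model with assumed unit names and figures; real plant control also has to handle ramp rates, valves, and control loops.

```python
def redistribute(load_kw, capacities_kw, failed):
    """Share load_kw across healthy units in proportion to their capacity.

    capacities_kw maps unit name to capacity; failed is a set of unit names.
    Raises RuntimeError if the healthy units cannot carry the load.
    """
    healthy = {name: cap for name, cap in capacities_kw.items()
               if name not in failed}
    total = sum(healthy.values())
    if total == 0 or total < load_kw:
        raise RuntimeError("insufficient cooling capacity after failure")
    return {name: load_kw * cap / total for name, cap in healthy.items()}


# Hypothetical N+1 plant: three 300 kW units carrying a 600 kW load.
units = {"crac-a": 300.0, "crac-b": 300.0, "crac-c": 300.0}
print(redistribute(600.0, units, failed=set()))
print(redistribute(600.0, units, failed={"crac-a"}))
```

Raising an error when capacity is insufficient makes the unsafe case explicit, which is the point at which an operator would shed load or escalate.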

Monitoring, Automation, and Intelligent Cooling

Cooling is no longer static. It is dynamically managed through data and automation.

Real-Time Monitoring and IoT Integration

Real-time monitoring systems track temperature, humidity, and airflow across the facility. IoT sensors provide granular visibility, allowing operators to identify inefficiencies and risks.

This data-driven approach enables precise control, reducing energy waste while maintaining stability.
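
As a minimal sketch of what sensor data makes possible, the function below flags hotspots in a set of inlet temperature readings. A sensor is flagged if it exceeds an absolute limit or sits well above the median of its peers, which catches localized problems even when the room average looks healthy. The limit, the delta, and the sensor names are assumptions for illustration.

```python
from statistics import median


def find_hotspots(readings_c, limit_c=27.0, delta_c=5.0):
    """Return sensor names that exceed limit_c or run delta_c above the median."""
    baseline = median(readings_c.values())
    return sorted(
        name for name, temp in readings_c.items()
        if temp > limit_c or temp - baseline > delta_c
    )


# Hypothetical readings from four rack inlet sensors.
readings = {"rack-a": 22.0, "rack-b": 23.0, "rack-c": 22.5, "rack-d": 31.0}
print(find_hotspots(readings))
```

The median is used as the baseline rather than the mean so that a single hot sensor does not pull the reference point upward.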

AI and Machine Learning in Cooling Optimization

AI and machine learning are increasingly used to optimize cooling performance. These systems analyze patterns, predict thermal behavior, and adjust operations in real time.

The result is improved energy efficiency and more responsive cooling strategies, particularly in variable load environments.
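
Production systems use trained models, but the underlying idea of predicting thermal behavior can be shown with something much simpler. The sketch below fits a least-squares line to recent, evenly spaced temperature samples and extrapolates it forward. It is a plain linear trend standing in for a learned model, and the function name and sample values are assumptions for illustration.

```python
def linear_forecast(samples, steps_ahead):
    """Extrapolate evenly spaced samples with a least-squares straight line."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2
    mean_y = sum(samples) / n
    sxx = sum((i - mean_x) ** 2 for i in range(n))
    sxy = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(samples))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # The last sample sits at x = n - 1, so forecast from there.
    return intercept + slope * (n - 1 + steps_ahead)


# Hypothetical inlet temperatures sampled once per minute.
recent = [22.0, 22.5, 23.0, 23.5]
predicted = linear_forecast(recent, steps_ahead=2)
print(f"predicted inlet temperature in 2 minutes: {predicted:.1f} C")
```

A controller could compare the forecast with a limit and raise cooling output before the limit is reached, rather than reacting after the fact.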

Efficiency Metrics and Performance Optimization

Performance is measured through standardized metrics that reflect both operational efficiency and energy usage.

Understanding PUE and Energy Efficiency Metrics

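Power usage effectiveness (PUE) remains the most widely used metric. It is the ratio of total facility energy to the energy consumed by IT equipment, so a value of 1.0 would mean that all energy reaches the IT load. Lower PUE values indicate higher efficiency.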

Cooling systems play a significant role in this metric, because cooling typically accounts for a large share of the energy that does not reach IT equipment.
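
The calculation itself is a single division. The sketch below computes PUE from two energy totals and reports the overhead energy, meaning everything that is not IT load. The energy figures and function names are assumptions for illustration.

```python
def pue(total_facility_kwh, it_kwh):
    """Power usage effectiveness: total facility energy divided by IT energy."""
    if it_kwh <= 0:
        raise ValueError("it_kwh must be positive")
    return total_facility_kwh / it_kwh


def overhead_kwh(total_facility_kwh, it_kwh):
    """Energy used by cooling, power distribution losses, lighting, and so on."""
    return total_facility_kwh - it_kwh


# Hypothetical month: 1,500 MWh for the facility, 1,000 MWh of it for IT.
print(f"PUE = {pue(1_500_000, 1_000_000):.2f}")
print(f"overhead = {overhead_kwh(1_500_000, 1_000_000):,} kWh")
```

In this example one third of the facility's energy is overhead, so any reduction in cooling energy lowers the PUE directly.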

Reducing Energy Consumption Through Smart Cooling

Optimizing airflow, improving heat rejection, and implementing advanced cooling technologies all contribute to reduced energy consumption.

Smart cooling strategies align performance with demand, avoiding overcooling and unnecessary energy use.
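
Aligning cooling output with demand can be illustrated with a simple proportional rule: fan speed rises with the gap between measured inlet temperature and a setpoint, and is clamped to safe limits. The setpoint, gain, and speed limits below are assumptions for illustration, and real controllers usually add integral action and rate limiting.

```python
def fan_speed_pct(inlet_c, setpoint_c=24.0, base_pct=40.0,
                  gain_pct_per_c=10.0, min_pct=30.0, max_pct=100.0):
    """Proportional fan speed: base speed plus gain times the temperature error."""
    speed = base_pct + gain_pct_per_c * (inlet_c - setpoint_c)
    # Clamp so fans never stop completely and never exceed full speed.
    return max(min_pct, min(max_pct, speed))


for temp in (20.0, 24.0, 27.0, 35.0):
    print(f"{temp:.0f} C -> {fan_speed_pct(temp):.0f}% fan speed")
```

Running fans at the minimum speed when inlet air is already cool is what avoids overcooling, while the upper clamp reflects the physical limit of the equipment.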

Sustainable Cooling and Environmental Impact

Sustainability is becoming a central consideration in cooling system design.

Free Cooling and Natural Resource Optimization

Free cooling, also called economization, uses cool outside air or water to reject heat with little or no compressor operation. This approach can significantly lower energy consumption in suitable climates.

It represents a shift toward integrating natural resources into infrastructure design.
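
Whether a climate is suitable can be estimated from weather data. The sketch below computes the fraction of hours in which the outdoor temperature is low enough for free cooling. The 18 °C threshold and the sample temperatures are assumptions for illustration; the usable threshold depends on the supply temperature target and the heat exchanger design, and air-side systems must also consider humidity.

```python
def free_cooling_fraction(hourly_outdoor_c, max_outdoor_c=18.0):
    """Fraction of hours at or below max_outdoor_c, a hypothetical threshold."""
    if not hourly_outdoor_c:
        raise ValueError("no temperature data supplied")
    eligible = sum(1 for temp in hourly_outdoor_c if temp <= max_outdoor_c)
    return eligible / len(hourly_outdoor_c)


# Hypothetical sample of outdoor temperatures in degrees Celsius.
sample = [5.0, 10.0, 15.0, 20.0, 25.0, 30.0]
print(f"free cooling available {free_cooling_fraction(sample):.0%} of the time")
```

Run over a full year of hourly data for a candidate site, the same function gives a first-pass view of how much mechanical cooling could be avoided.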

Sustainability Trends in Data Center Cooling

Sustainability initiatives focus on reducing environmental impact through efficient resource use. This includes minimizing water consumption, improving energy efficiency, and reducing carbon footprint.

Cooling systems are at the forefront of these efforts, as they represent a major portion of operational energy use.

Future of Data Center Cooling Systems

The future of cooling is defined by increasing complexity and intelligence.

Cooling for AI and High-Performance Computing

AI workloads continue to push thermal limits, requiring advanced cooling solutions capable of handling extreme density.

Liquid and immersion cooling are expected to play a larger role as these demands grow.

The Shift Toward Intelligent and Autonomous Systems

Automation will continue to evolve, with AI-driven systems managing cooling with minimal human intervention. These systems will optimize performance, predict failures, and adapt to changing conditions.

Conclusion: Cooling as the Backbone of Modern Infrastructure

Cooling systems are no longer passive components. They are active, intelligent systems that sustain every aspect of data center operation. From managing thermal load to ensuring uptime and enabling sustainability, cooling defines the limits of what infrastructure can achieve. As technology advances, its role will only become more critical, shaping the future of digital systems in ways that remain largely unseen but essential.